Tokens and Usage Costs Will Soon Become Critical

Right now, several forward-thinking companies are already using Claude Enterprise to build custom knowledge bases, integrate their existing tools, and save hundreds of thousands of dollars per year. Some large organizations report hundreds of thousands of hours saved annually, which translates into massive cost reductions. But this is quickly moving from an early-adopter advantage to a mainstream necessity.

Automating a big chunk of your work is expensive—but who exactly are these expenses being paid to? And what's the real ROI?

As we've built our internal systems at Avant, it's become clear that these costs flow through several layers of a massive "AI onion". Claude is our current workhorse (the core LLM layer). Google Workspace (or Microsoft 365) serves as the supporting data storage and productivity tool layer. Then come the infrastructure pieces: Vercel for hosting customer websites and apps, Supabase for storing data in those apps, and the Groq API for lightning-fast inference on open-source models like Meta's Llama. On top of those sit the Perplexity API, for the heavier research queries that agents run as part of workflows, and Firecrawl, for scraping websites to find more information. Finally, Claude Code, Codex, and Kimi Code, running in VS Code or Antigravity, are what we use to build workflows, automations, and applications.

No idea what I am yapping about? Well, I believe you should have an idea, and I can help you learn the lingo. Or you can use YouTube and spend a few hours a day teaching yourself. Or you can get left behind and fail. I believe this discourse about practical AI use will be as essential to business success as basic math and accounting are today.

If every employee gains an army of AI agents and becomes substantially more productive, your role as a manager changes dramatically. You used to be the maestro conducting a team of specialists. Janet was your star trumpet player in HR; Henry was your French horn virtuoso in payroll and bookkeeping. Now everyone shifts up a level. The menial tasks are automated, and Janet and Henry now guide autonomous AI agents, manage outputs, keep files organized, and apply the human judgment that LLMs still can't fully replicate.

In short, every employee becomes a maestro in their own right. As a leader, you must give them what they need to succeed: (1) competitive pay, and (2) the right resources.

The Looming Cost of AI Infrastructure

I believe the second part of that equation will soon become far more expensive. Instead of just running PowerPoint, your team will be running massive, energy-hungry AI models on a computing scale that is staggering at the macro level. To put this in perspective, data centers powering today's AI boom are projected to consume 6.7–12% of total U.S. electricity by 2028. And honestly, 12% is a very low estimate; it will be closer to 20% unless something changes.

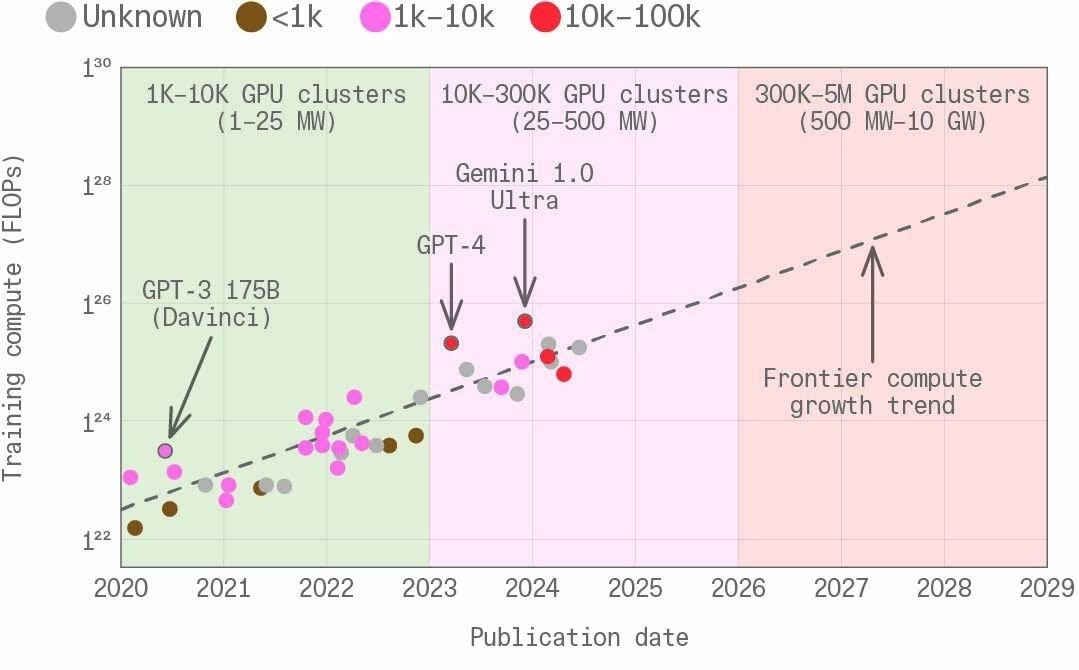

GRAPHIC 1: The Staggering Scale of AI Infrastructure (Adapted from The Scaling Era, Figure 9: Three of the inputs to frontier model training)

Visualizing the Trend: If you map the trajectory of AI models from 2020 out to 2029, you see a steep, exponential climb across three axes:

- Compute (FLOPs): Skyrocketing exponentially year-over-year.

- Hardware: Scaling from clusters of 1,000 GPUs to over 10 million H100-equivalents.

- Power (MW): Moving rapidly from Megawatts into the Gigawatt (GW) range. By 2026, AI clusters could reach 1 GW (the size of a large nuclear reactor), and by 2030, a $1 trillion cluster could consume 100 GW—over 20 percent of U.S. electricity production.

Takeaway: This macro-level energy and hardware demand will eventually filter down to drive up end-user costs, fundamentally altering the economics of your business's software stack.
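The "over 20 percent" figure in the power bullet above can be sanity-checked with a few lines of arithmetic. This is only a rough sketch: the U.S. annual generation number (about 4,200 TWh) is an approximate assumption that does not come from this article, and the cluster is assumed to draw its full 100 GW around the clock.

```python
# Rough sanity check of the "100 GW is over 20% of U.S. electricity" claim.
# ASSUMPTION: U.S. annual electricity generation of ~4,200 TWh is an
# approximate outside figure, not taken from this article.
HOURS_PER_YEAR = 8_760
US_ANNUAL_GENERATION_TWH = 4_200


def cluster_share(cluster_gw: float,
                  annual_generation_twh: float = US_ANNUAL_GENERATION_TWH) -> float:
    """Fraction of annual generation used by a cluster running at full load all year."""
    cluster_twh = cluster_gw * HOURS_PER_YEAR / 1_000  # GWh -> TWh
    return cluster_twh / annual_generation_twh


if __name__ == "__main__":
    for gw in (1, 100):
        print(f"{gw} GW cluster: {cluster_share(gw):.1%} of U.S. generation")
```

Under those assumptions, a 1 GW cluster is a rounding error at the national level, while a 100 GW cluster lands in the neighborhood of one fifth of all generation.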

A realistic per-employee scenario in 5 years: company laptop (~$2,000), company phone (~$2,000 annually), and AI subscriptions plus heavy usage ($10,000–$15,000+). Mark my words.
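To see what that scenario means for a budget, here is a minimal sketch of the arithmetic. The dollar figures are the ones above; the three-year laptop lifespan, the reading of the AI figure as an annual cost, and the function names are my own assumptions for illustration.

```python
# Hypothetical per-employee cost sketch using the figures from the scenario above.
LAPTOP_PRICE = 2_000           # one-time purchase
LAPTOP_LIFESPAN_YEARS = 3      # ASSUMPTION: laptop replaced every three years
PHONE_ANNUAL = 2_000           # per year
AI_ANNUAL_LOW = 10_000         # ASSUMPTION: AI figures are treated as per year
AI_ANNUAL_HIGH = 15_000


def annual_cost_per_employee(ai_annual: float) -> float:
    """Yearly tooling cost for one employee, with the laptop amortized."""
    return LAPTOP_PRICE / LAPTOP_LIFESPAN_YEARS + PHONE_ANNUAL + ai_annual


def ai_share(ai_annual: float) -> float:
    """Fraction of the yearly tooling cost that goes to AI."""
    return ai_annual / annual_cost_per_employee(ai_annual)


if __name__ == "__main__":
    team_size = 10  # hypothetical team
    for label, ai in (("low", AI_ANNUAL_LOW), ("high", AI_ANNUAL_HIGH)):
        total = annual_cost_per_employee(ai) * team_size
        print(f"{label}: ${total:,.0f} per year for {team_size} people, "
              f"AI share {ai_share(ai):.0%}")
```

The point of the exercise: in this scenario AI is no longer a line item next to the hardware. It is most of the tooling budget.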

Right now, however, AI is still remarkably affordable for early adopters. On an absolute basis these tools are cheap, but on a relative basis (relative to the ROI they deliver to the customer, and relative to the actual cost of providing them) they are basically free. Companies desperately need users, so they're accepting unfavourable economics, just like Uber and Amazon did in their growth phases. Today's AI leaders are following the classic venture capital playbook: raise massive funding, spend aggressively to capture users, and monetize later. We are currently in a bubble where overfunded companies are heavily subsidizing access. Small business owners should take full advantage of this window. It appears to be closing rapidly.

With a war in Iran, the oil price volatility that has ensued, and the worst quarter in stocks in 4 years, the window is closing quicker than I thought. For example, the collapse of OpenAI's Sora video platform has been swift and brutal. What began as Sam Altman's bid to dominate creative AI ended as a cautionary tale about the gap between hype and economics. As The Wall Street Journal reported in its opening assessment, "Sam Altman hoped Sora would turn OpenAI into a creative pioneer. Instead, it looks like an expensive strategic miscalculation". The platform, which burned through roughly $1 million per day while hemorrhaging users, was abruptly shut down in late March 2026, leaving even major partners like Disney blindsided by the reversal.

AI's hundreds of billions in funding can only sustain unprofitable growth for so long. Currently, the economics favour the user: the early adopter who learns these tools now, before pricing reflects true market value. The majority will face a steeper learning curve and higher costs in 2–5 years, learning out of necessity rather than curiosity. Like Excel, QuickBooks, and Salesforce before it, AI is already delivering 10x productivity gains to early power users. As it becomes more accessible and accepted, those who wait won't simply pay more; they'll be playing catch-up in a landscape where AI fluency is simply assumed.

At the SMB level, firms that invest in and adapt to this new world will enjoy a lasting competitive advantage.

However, cheaper access does not automatically translate into business value.

In fact, recent data from the MIT State of AI in Business 2025 report shows that a staggering 95% of generative AI pilots currently deliver zero measurable P&L impact or financial return. Furthermore, instead of acting as a magical shortcut, DIY AI implementations frequently intensify workloads; Harvard Business Review research shows that AI can cause employees to take on more tasks and extend their hours rather than actually reducing their burden.

You can use subsidized AI costs to your advantage right now, but because most DIY AI implementations fail to yield a positive ROI, businesses need guided, expert implementation from Avant to unlock actual productivity gains.

Sources:

2024 United States Data Center Energy Usage Report, Lawrence Berkeley National Laboratory, U.S. Department of Energy, January 2025.

The Sudden Fall of OpenAI's Most Hyped Product Since ChatGPT, The Wall Street Journal, March 29, 2026.

The GenAI Divide: State of AI in Business 2025, MIT NANDA Initiative, August 2025.

AI Doesn't Reduce Work—It Intensifies It, Ranganathan, A. & Ye, X.M., Harvard Business Review, February 9, 2026.